GPT4All - What’s All The Hype About

Yes, you can now run a ChatGPT alternative on your PC or Mac, all thanks to GPT4All. It’s an open-source ecosystem of chatbots trained on massive collections of clean assistant data including code, stories, and dialogue, according to the official repo About section.

But is it any good? As it turns out, it’s nowhere near the sheer power ChatGPT has, but it’s still a usable alternative you can run locally without an Internet connection.

Still, it might be a good fit for those who want a ChatGPT-like solution available at all times, completely free of charge.

This article will show you how to install GPT4All on any machine, from Windows and Linux to Intel and ARM-based Macs, go through a couple of questions including Data Science code generation, and then finally compare it to ChatGPT.

How to Install GPT4All on Your PC or Mac

To install GPT4All locally, you’ll have to follow a series of stupidly simple steps.

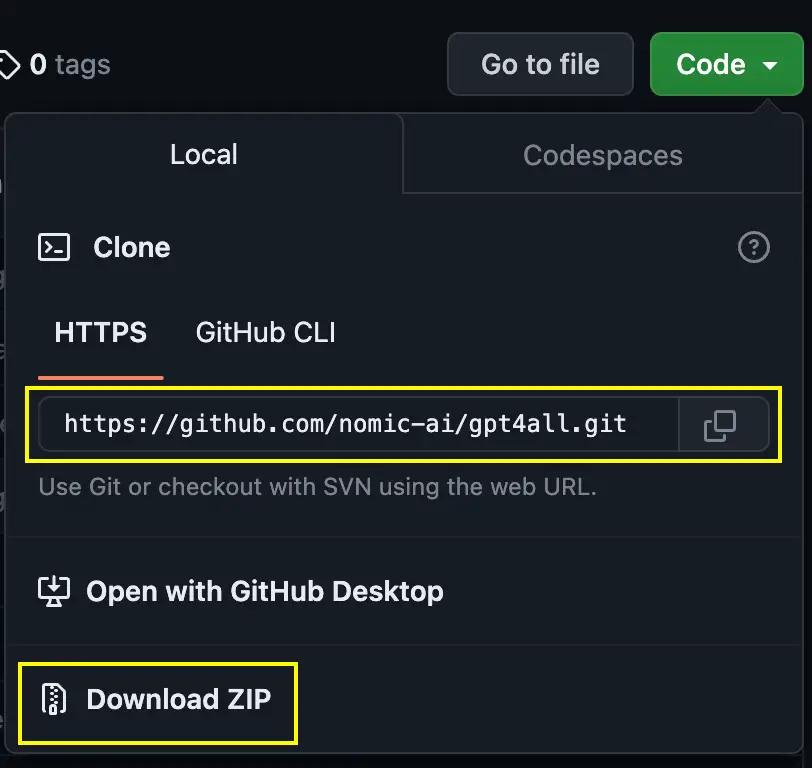

1. Clone the GitHub Repo

First, open the Official GitHub Repo page and click on green Code button:

Image 1 - Cloning the GitHub repo (image by author)

You can clone the repo by running this shell command:

git clone https://github.com/nomic-ai/gpt4all.git

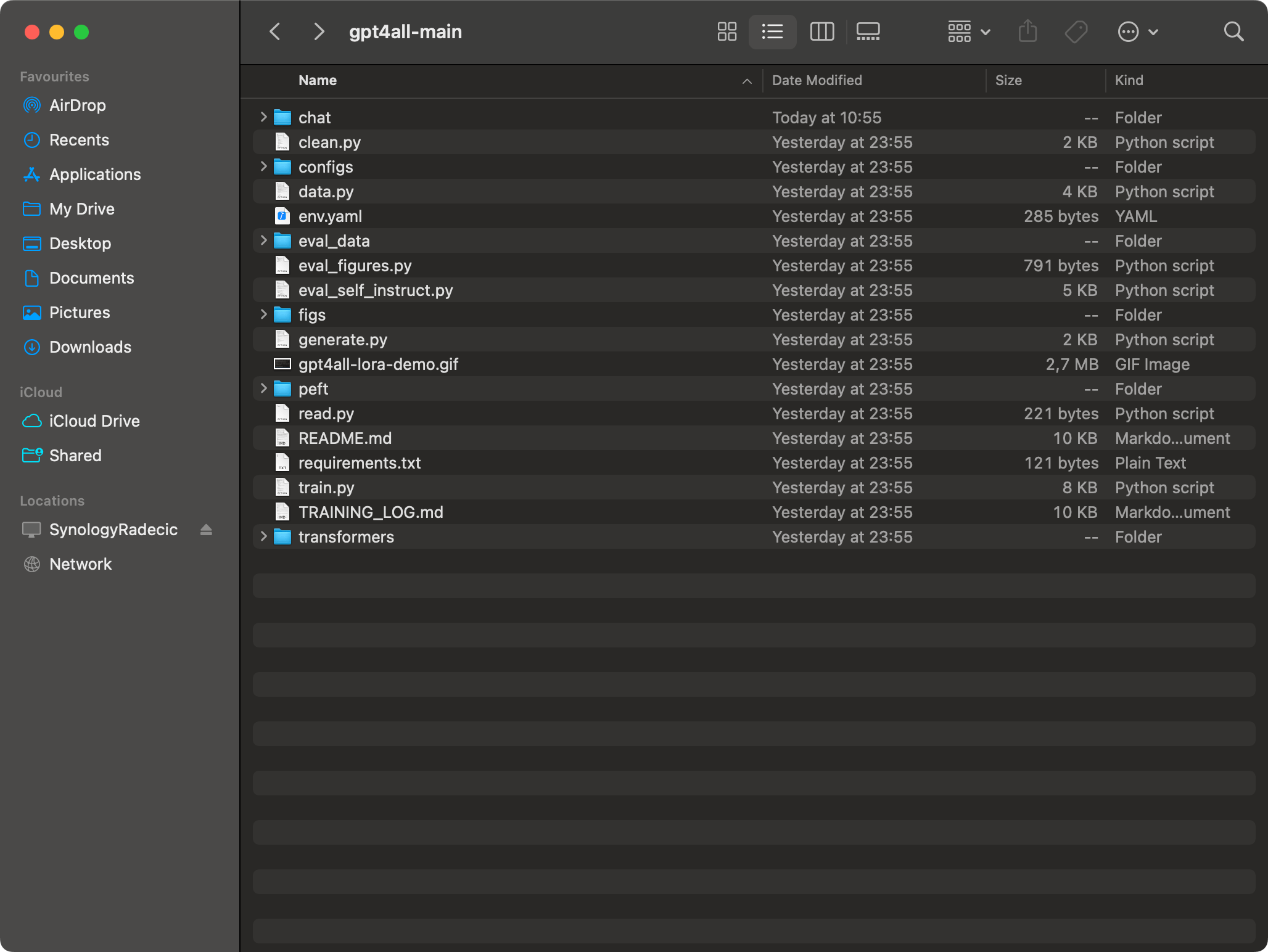

Or you can download the ZIP file and extract it wherever you want. Anyhow, here’s what you should see inside the folder:

Image 2 - Contents of the gpt4all-main folder (image by author)

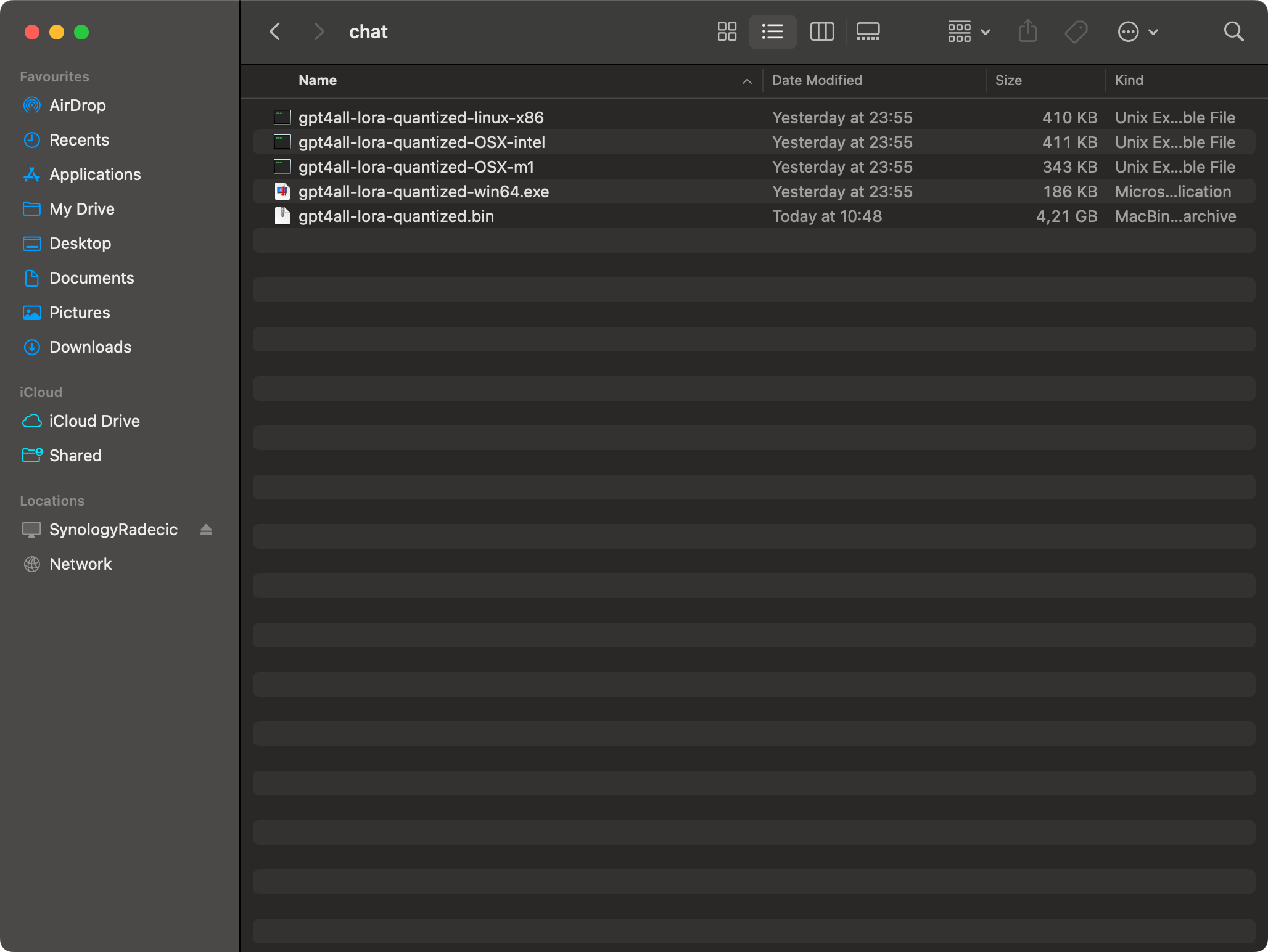

2. Download the BIN file

Assuming you have the repo cloned or downloaded to your machine, download the gpt4all-lora-quantized.bin file from the Direct Link.

The file is around 4GB in size, so be prepared to wait a bit if you don’t have the best Internet connection.

Once downloaded, move the file into gpt4all-main/chat folder:

Image 3 - GPT4All Bin file (image by author)
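If you’d rather script the move than drag the file around, here’s a minimal Python sketch of that step. The place_model helper and its arguments are made up for illustration (they’re not part of GPT4All), and it assumes the chat folder already exists because you cloned or extracted the repo first:

```python
import shutil
from pathlib import Path


def place_model(downloaded: Path, chat_dir: Path) -> Path:
    """Move a downloaded model file into the chat folder and return its new path.

    Hypothetical helper for illustration only, not part of GPT4All.
    """
    if not downloaded.is_file():
        raise FileNotFoundError(f"Model file not found: {downloaded}")
    if not chat_dir.is_dir():
        # The chat folder ships with the repo, so a missing folder usually
        # means the repo was not cloned or extracted where you expected.
        raise NotADirectoryError(f"Chat folder not found: {chat_dir}")

    target = chat_dir / downloaded.name
    shutil.move(str(downloaded), str(target))
    return target
```

You could call it as place_model(Path.home() / "Downloads" / "gpt4all-lora-quantized.bin", Path("gpt4all-main") / "chat"), adjusting both paths to wherever your download and repo actually live.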

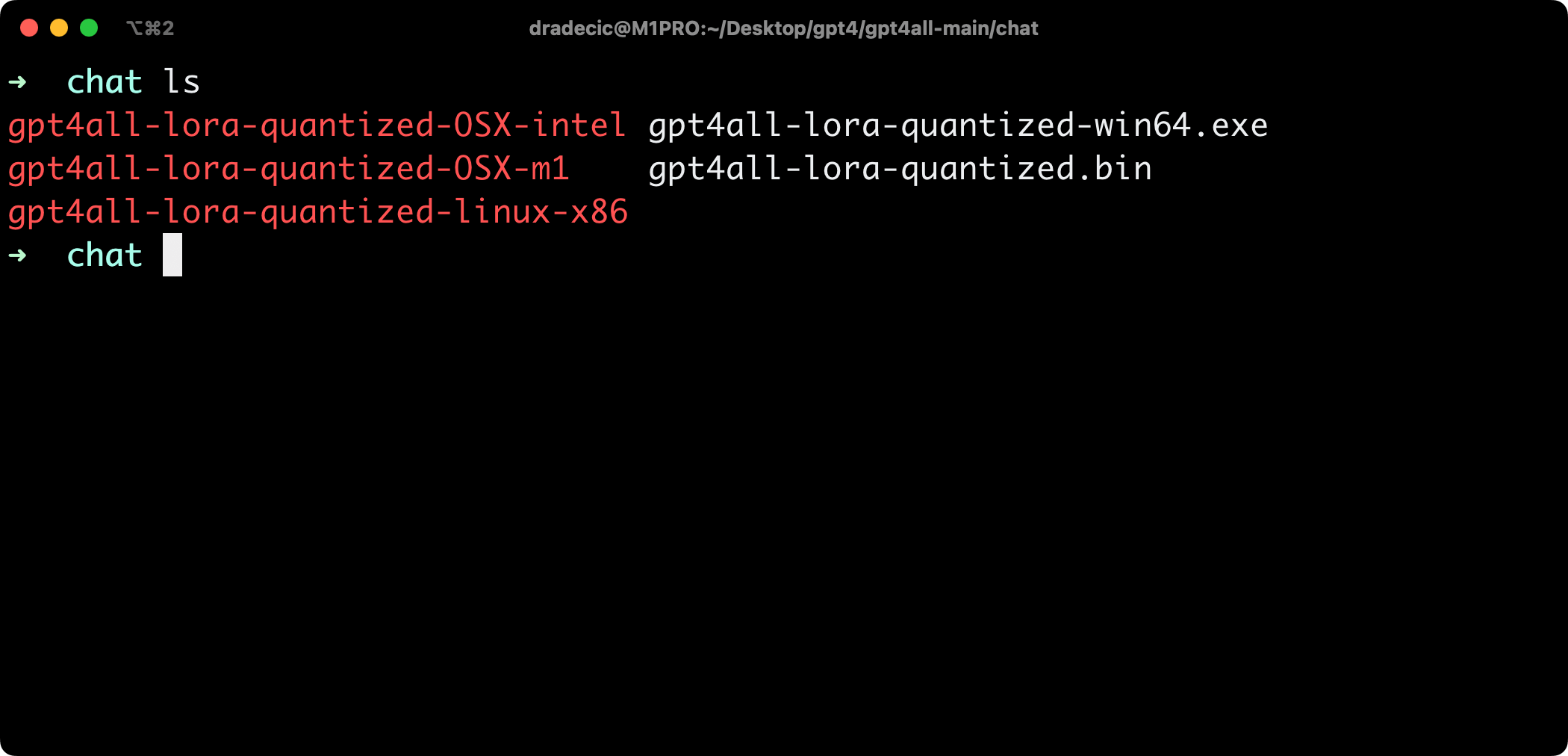

3. Run GPT4All from the Terminal

Open up Terminal (or PowerShell on Windows), and navigate to the chat folder:

cd gpt4all-main/chat

Image 4 - Contents of the /chat folder (image by author)

Run one of the following commands, depending on your operating system:

Windows (PowerShell):

./gpt4all-lora-quantized-win64.exe -m gpt4all-lora-unfiltered-quantized.bin

Linux:

./gpt4all-lora-quantized-linux-x86 -m gpt4all-lora-unfiltered-quantized.bin

Mac (Intel):

./gpt4all-lora-quantized-OSX-intel -m gpt4all-lora-unfiltered-quantized.bin

Mac (M1/M2):

./gpt4all-lora-quantized-OSX-m1 -m gpt4all-lora-unfiltered-quantized.bin

The -m flag points to the model file, so replace gpt4all-lora-unfiltered-quantized.bin with gpt4all-lora-quantized.bin if that’s the file you downloaded in step 2.
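If you switch between machines often, the per-platform choice above can be sketched in Python. This is only an illustration: chat_binary and chat_command are hypothetical helper names, the binary names are the ones listed above, and the sketch assumes that platform.machine() reports arm64 on M1/M2 Macs:

```python
import platform


def chat_binary(system=None, machine=None):
    """Return the chat binary name for the given (or current) platform."""
    system = system or platform.system()
    machine = (machine or platform.machine()).lower()

    if system == "Windows":
        return "gpt4all-lora-quantized-win64.exe"
    if system == "Linux":
        # Only an x86 Linux binary is listed in the commands above.
        return "gpt4all-lora-quantized-linux-x86"
    if system == "Darwin":
        # Assumption: Apple Silicon Macs report "arm64" as the machine type.
        if machine == "arm64":
            return "gpt4all-lora-quantized-OSX-m1"
        return "gpt4all-lora-quantized-OSX-intel"
    raise ValueError(f"Unsupported platform: {system}")


def chat_command(model_file, system=None, machine=None):
    """Build the command as an argument list, e.g. for subprocess.run."""
    return [f"./{chat_binary(system, machine)}", "-m", model_file]
```

From inside the chat folder, something like subprocess.run(chat_command("gpt4all-lora-quantized.bin")) would then start the chat, provided the model file name matches what you downloaded.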

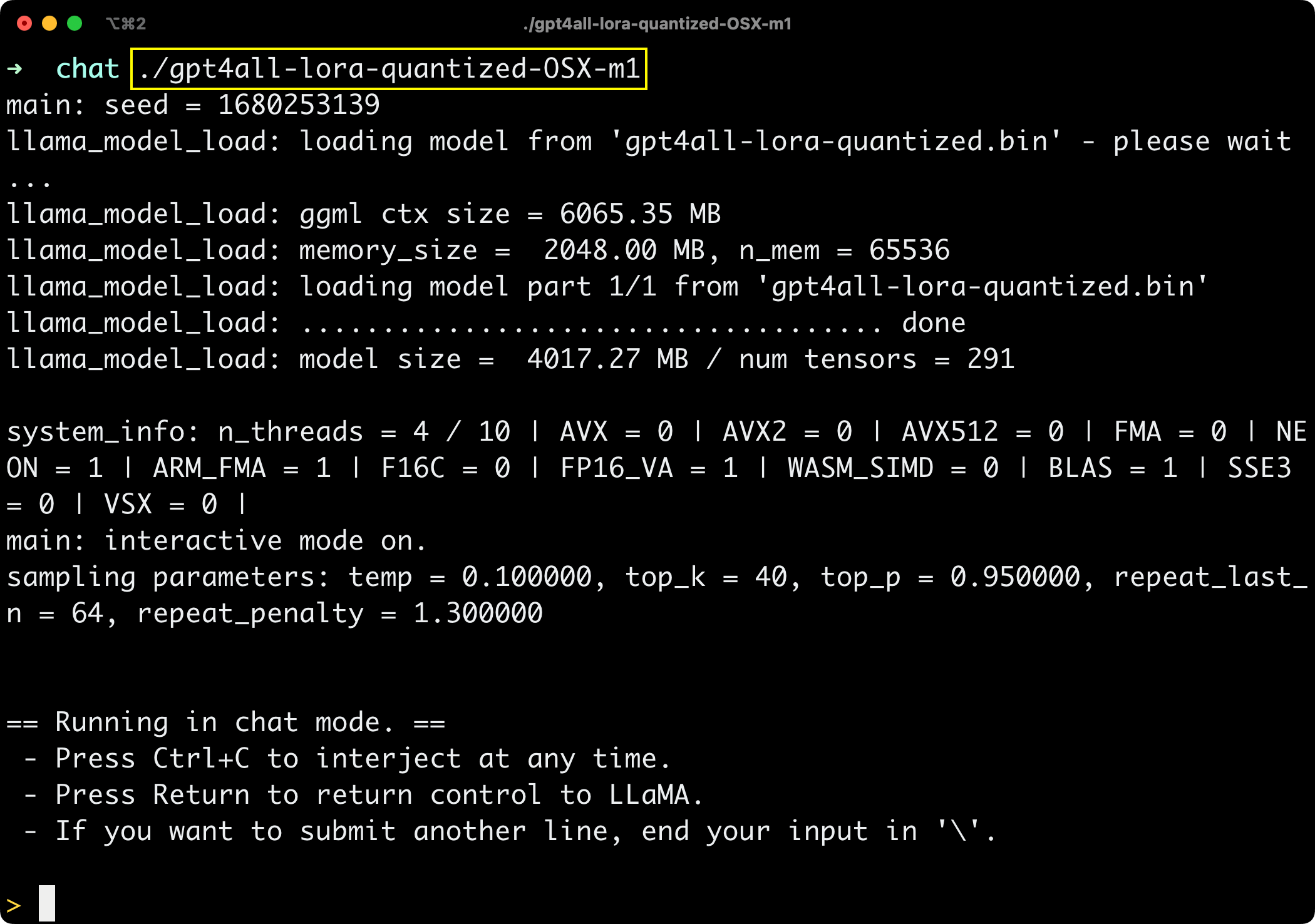

Here’s what you should see on your screen:

Image 5 - GPT4All window (image by author)

You’re in, so let’s see if GPT4All is any good next.

Testing out GPT4All - Is It Any Good?

I’ll now ask GPT4All a series of simple questions that I know ChatGPT would have no trouble answering.

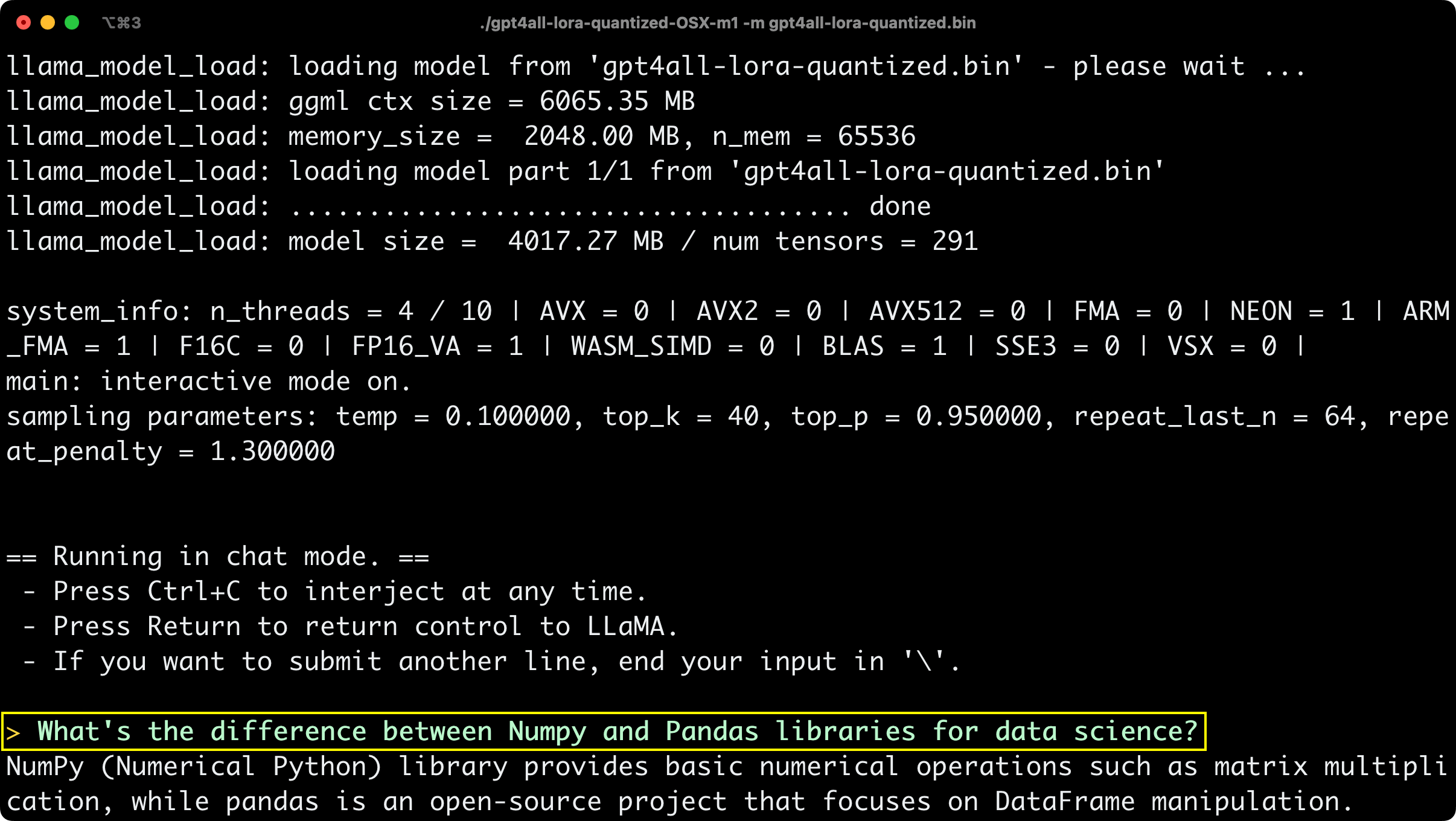

The first one is - What’s the difference between Numpy and Pandas libraries for data science?

Image 6 - GPT4All answer #1 (image by author)

The answer is technically correct but a bit vague, so let’s see if GPT4All can expand it a bit:

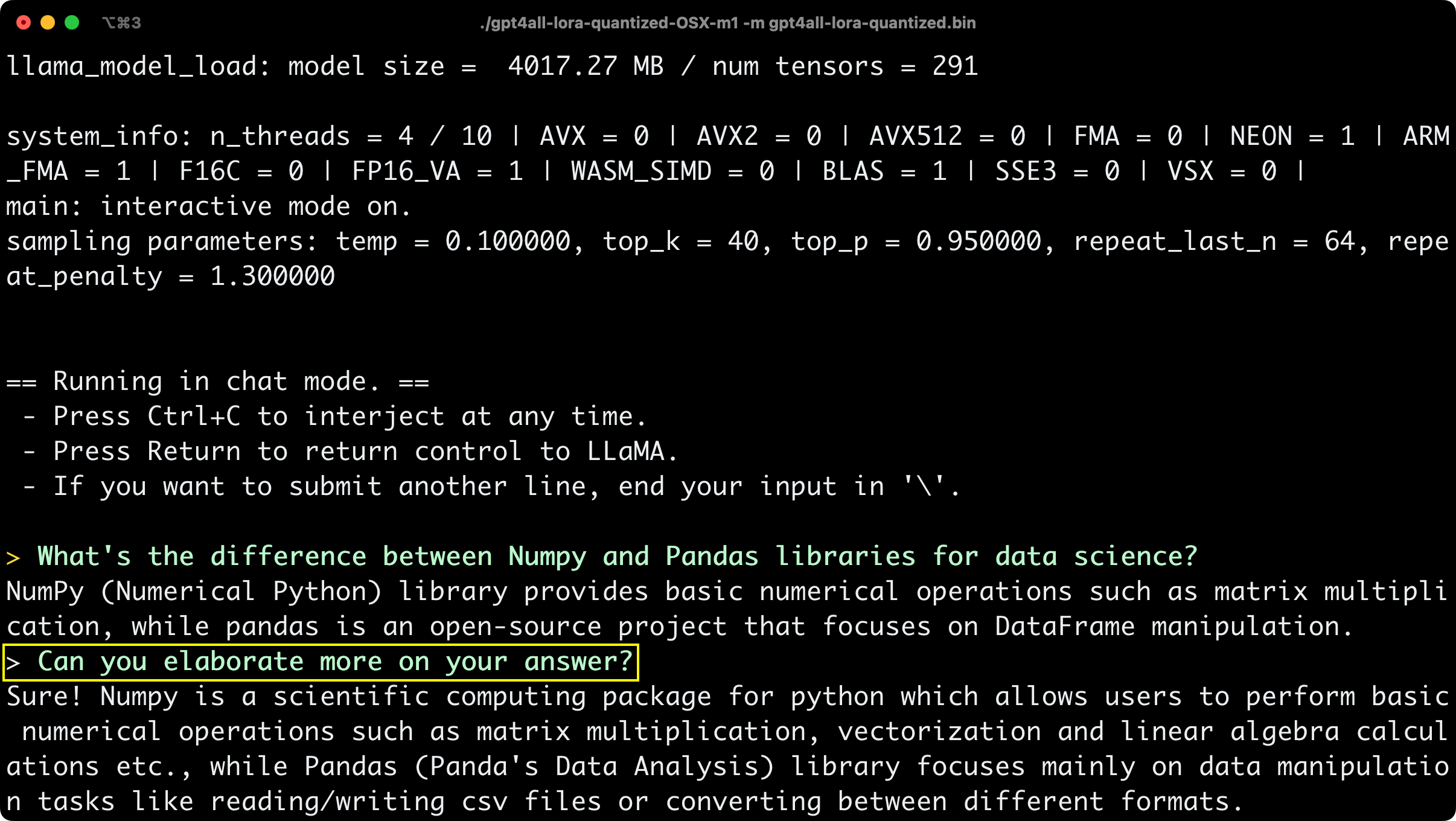

Image 7 - GPT4All answer #2 (image by author)

The answer is a bit longer now, but we’re accustomed to a couple of paragraph long explanations from ChatGPT, so this still feels a bit short and unpolished.

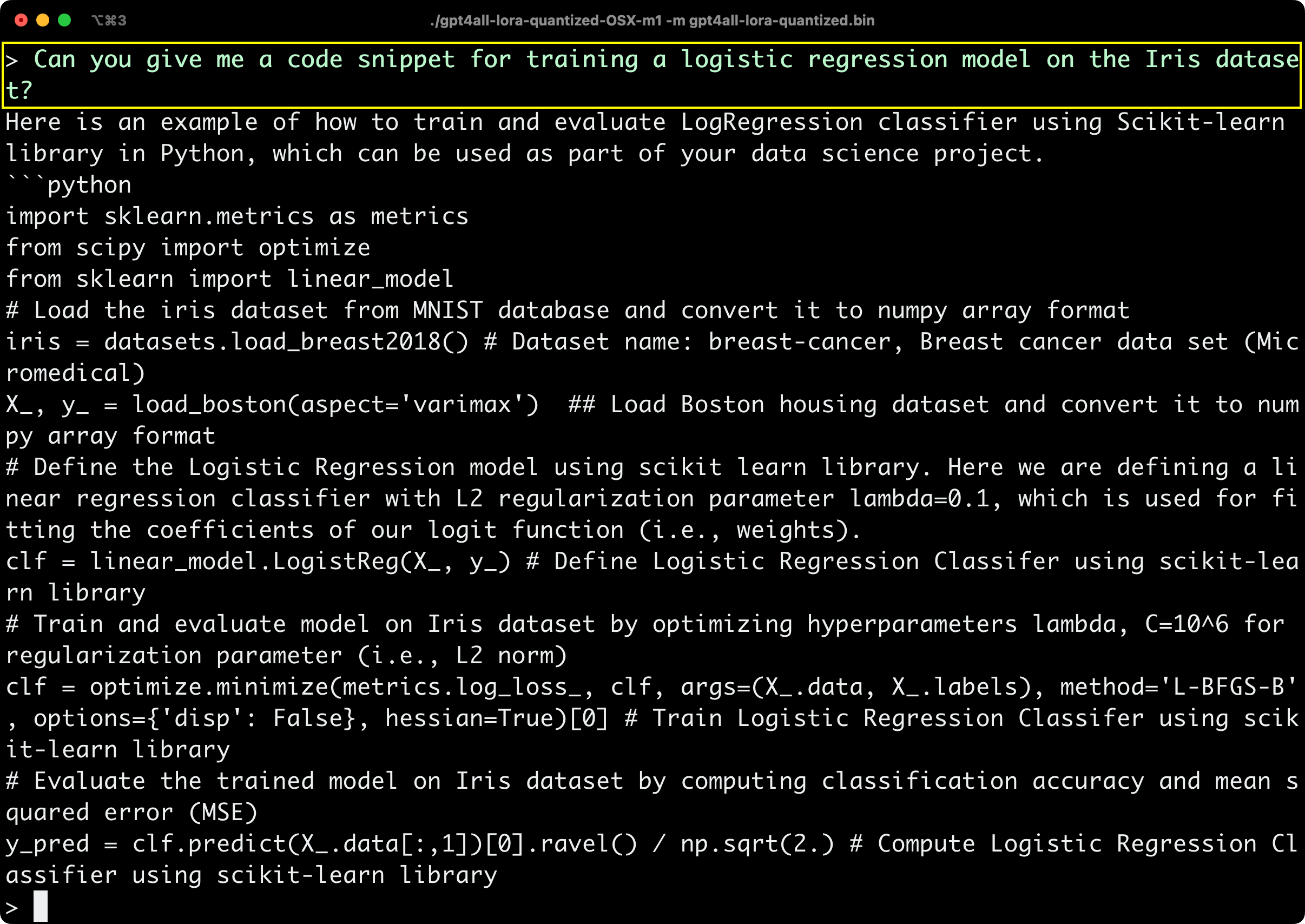

And now, Can GPT4All generate code? I’ve asked the following question - Can you give me a code snippet for training a logistic regression model on the Iris dataset?

Here’s what it came up with:

Image 8 - GPT4All answer #3 (image by author)

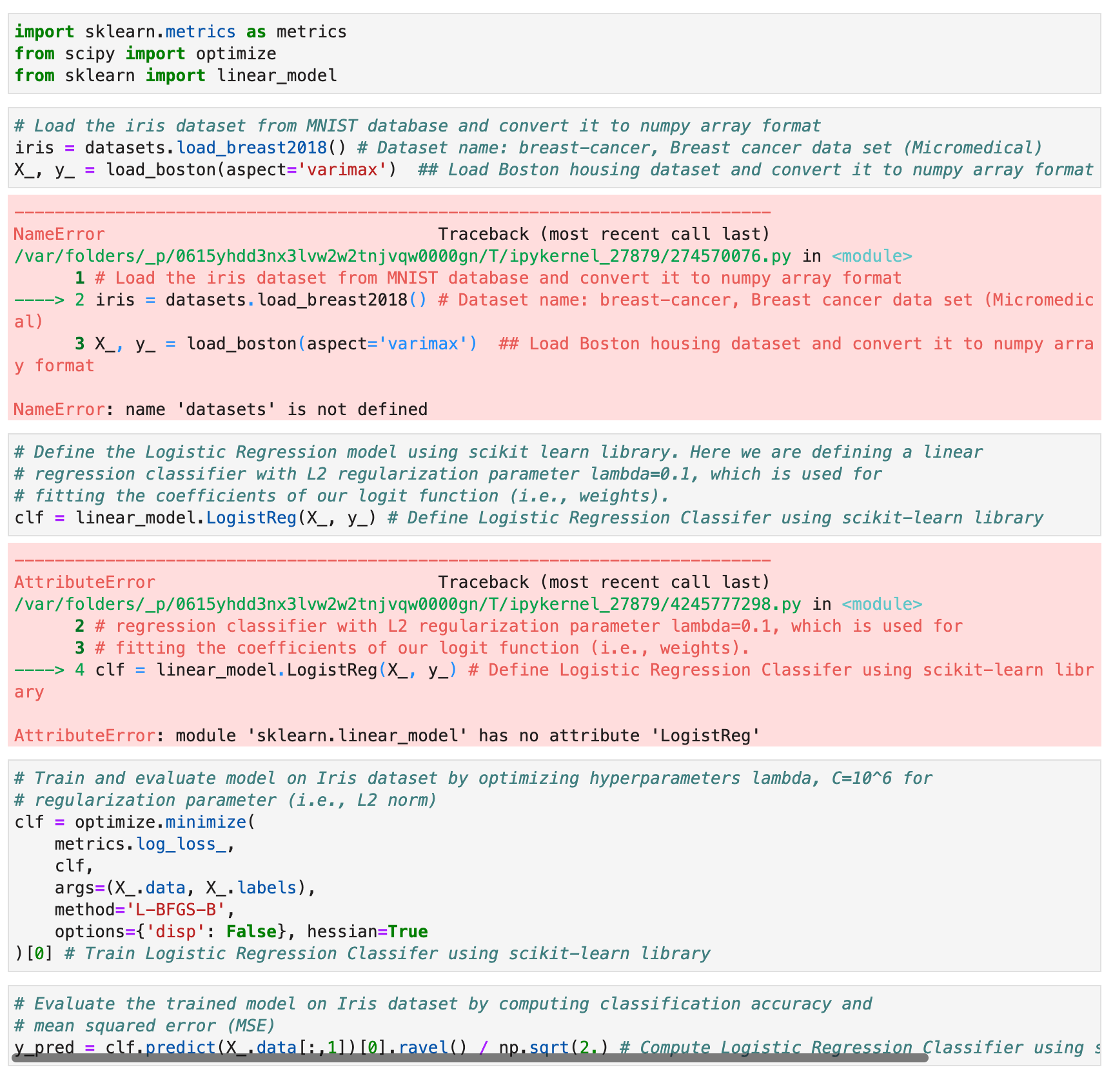

It’s a common question among data science beginners and is surely well documented online, but GPT4All gave something of a strange and incorrect answer. Let’s copy the code into Jupyter for better clarity:

Image 9 - GPT4All answer #3 in Jupyter (image by author)

GPT4All is on point with code comments, but everything else falls short. It’s loading the wrong dataset from a library that wasn’t even imported and then

GPT4All vs. ChatGPT - Who Wins?

I’ll now ask ChatGPT the same questions to compare the quality of answers.

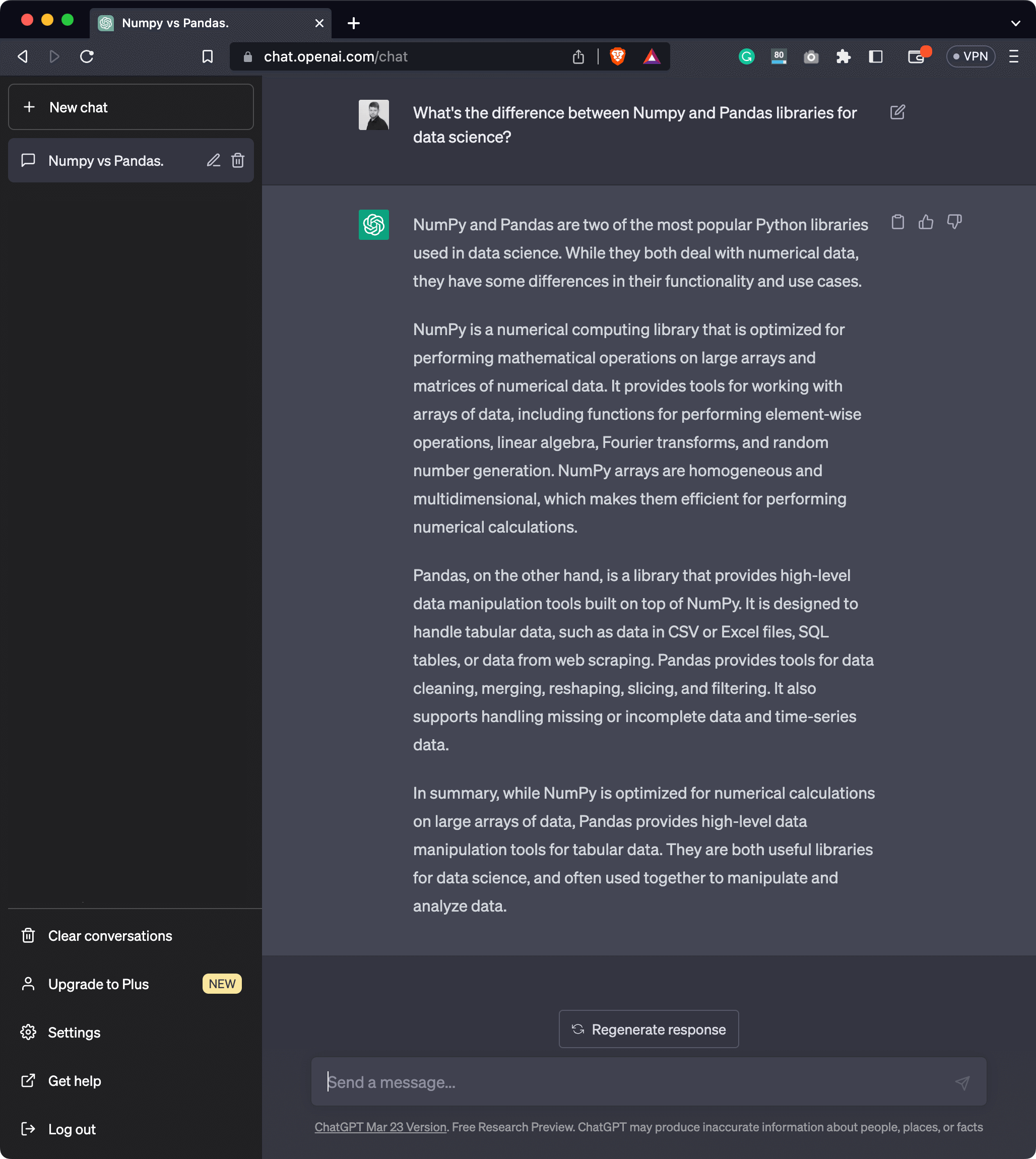

Let’s start with the first one - the difference between Numpy and Pandas libraries in the context of data science. Here’s the answer:

Image 10 - ChatGPT answer #1 (image by author)

Well, there’s no need to further elaborate on this. You can get a clear picture of the differences between these two libraries, and the generated response has more sense than the one returned by GPT4All.

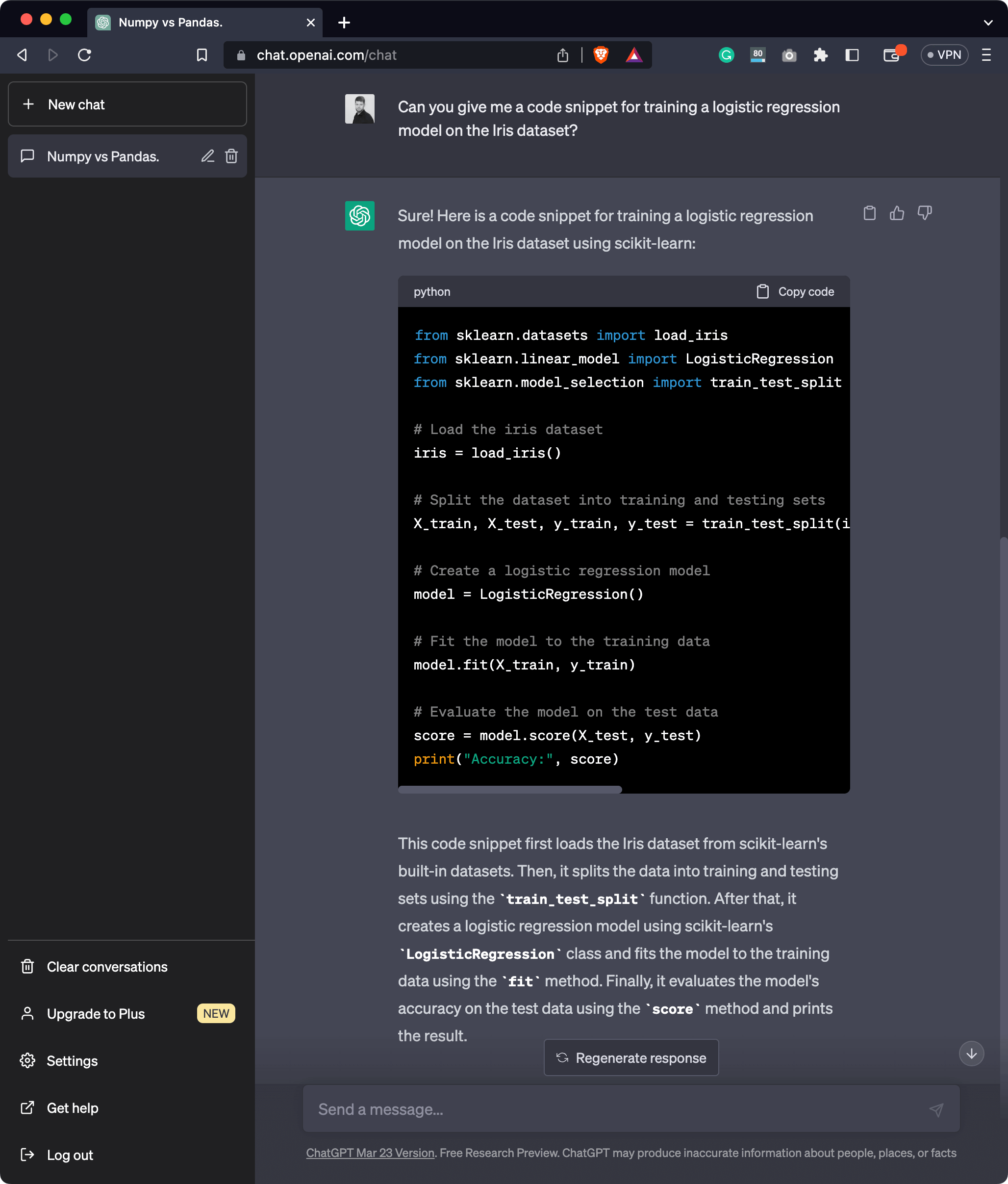

But now comes the big question - Can ChatGPT generate a 100% correct code? Let’s take a look:

Image 11 - ChatGPT answer #2 (image by author)

The code and explanations seem all right, but the only way to verify is to run the entire thing in Python:

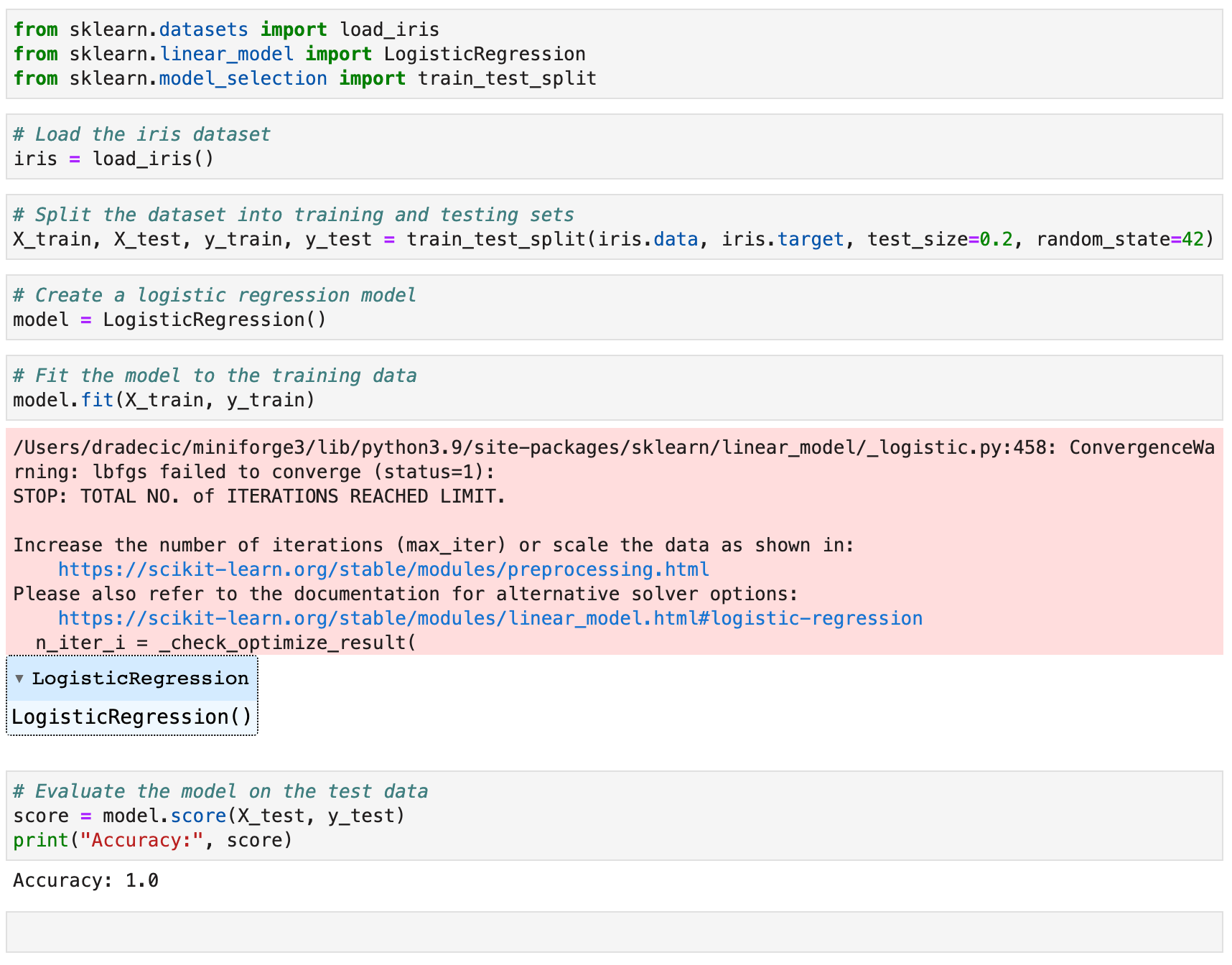

Image 12 - ChatGPT answer #2 in Jupyter (image by author)

There are no errors raised, and the code works as expected. It’s a simple example, sure, but it’s much faster than Googling for the answer.
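For reference, here’s roughly what a working answer to the Iris prompt can look like. To be clear, this is my own minimal sketch using scikit-learn, not the code either chatbot returned, and the train_iris_logreg function name, the 80/20 split, and the max_iter value are choices made just for this example:

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split


def train_iris_logreg(test_size=0.2, random_state=42):
    """Train a logistic regression model on Iris and return (model, test accuracy)."""
    # Load the features (X) and the class labels (y) of the Iris dataset
    X, y = load_iris(return_X_y=True)

    # Hold out part of the data so the model is scored on unseen samples
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=test_size, random_state=random_state, stratify=y
    )

    # A higher max_iter gives the solver room to converge on unscaled features
    model = LogisticRegression(max_iter=1000)
    model.fit(X_train, y_train)

    accuracy = accuracy_score(y_test, model.predict(X_test))
    return model, accuracy
```

Calling model, accuracy = train_iris_logreg() and printing accuracy is enough to check whether a generated snippet is in the right ballpark.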

Summing up GPT4All

There’s no denying that GPT4All is nowhere near ChatGPT, but that’s expected. It wouldn’t be free and open-source otherwise. Still, it’s a viable alternative and a free playground that runs locally on your system.

You can also use GPT4All through a Python interface, and that’s something we’ll look into in an upcoming article, so stay tuned to learn more.

What do you think of this open-source large language model? Do you see it catching up to ChatGPT in the near future? Let me know in the comment section below.